|

If you want to spare some time: I won't tell the canonical truth here, so please do not read further if you are seeking for a definite answer in this topic. Most applications of convolutional networks just stick to one network architecture. Let us say, there is a biomedical image segmentation problem and people tend to use the so-called UNET for solving their problem. But why that one specifically? The answers to these questions usually do not satisfy a researcher like me: "because it has been applied successfully before to a range of problems", "because it is straightforward to implement", "because we tried this and it worked well". Even if these answers are not scientific enough, the points make sense and it seems that there is a certain randomness in how preferred architectures are selected for specific tasks.

However, it is important to note the differences between specific image segmentation tasks. Do we want to distinguish a large number of classes in complex RGB images or do we intend to segment large numbers of simple objects in greyscale images? Does track counting resemble distinguishing zebras from horses or lions from tigers or is it something more similar to counting the number of cars on a satellite image? Yes, you got that right and that is why it should not be entirely arbitrary what kind of network one uses for which specific task! Different network architectures are mainly developed because they fit some specific requirements in image analysis better than others. Luckily, some resources, such as the segmentation models website (smp.readthedocs.io/en/latest/index.html) provides some brief hints regarding the preferred network for certain tasks. Even more importantly, their code examples can be implemented such as the user can flexibly switch between a range of network architectures without the need to rewrite the ML script significantly!

0 Comments

Deep learning, machine learning, neural networks, big data. All these terms became extremely popular recently within and outside academia. However, it is often unclear what each term means and what their relationship is. In fact, this is also the case in this project: it is advertised as a machine learning project, but I work on convolutional neural networks and computer vision. Yet, it is not a contradiction. I ask you for your understanding if I do not formulate everything precisely, my task during this fellowship is to learn how to use convolutional neural networks (CNN-s), I am by no means an expert in this field yet. To explain it in a very simple way, artificial intelligence is a group of computer algorithms that mimic human behaviour in specific tasks, such as language or image recognition. Machine learning is a subset of artificial intelligence that allows our system to learn from a given set of data automatically. Deep learning is again a subset of machine learning (and also of artificial intelligence) that refers to the multi-layered structure of the machine-learning algorithm. Convolutional neural networks are a subset of deep learning, which are mostly used for image recognition. Convolution basically extracts features from pictures (e.g. edges, textures, patterns) and converts them to lower dimensions without loosing the feature's characteristics. We need this dimension reduction for computing efficiency: imagine that if we had to work with 1024*1024*3 input data for each feature all the time...yet we can do the same with a linear vector, let's say 1024*1*1 by keeping the same input data characteristics. One can imagine that this is computationally quite intensive even if we apply convolution. Therefore, it is advisable to run machine learning on a GPU. This can either be done on our own GPU (expensive but handy) or through a cloud/cluster.

The question I often got while talking about fission-track dating: why don't you use artificial intelligence? Like a hammer to break a rock, one would think. But the difference is that artificial intelligence is in our case not an "off the shelf" product. While there are extremely useful resources online that we ourselves also prefer to use, everything has to be implemented for our application. This means roughly the following procedure:

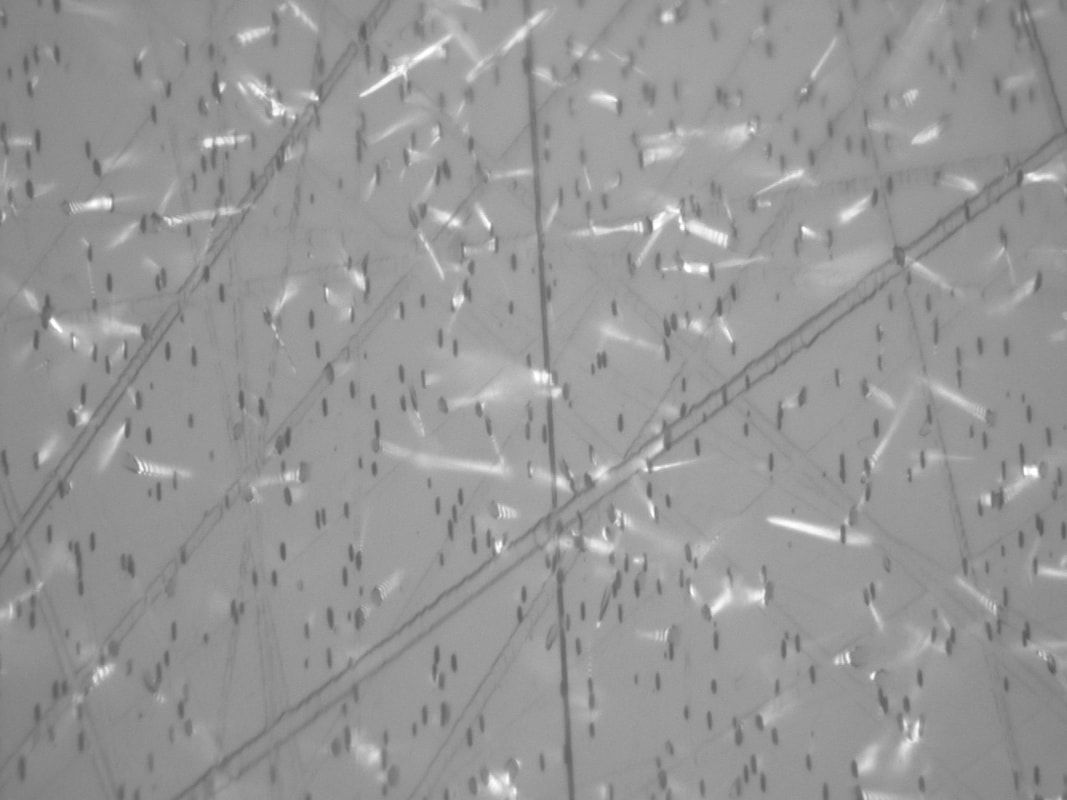

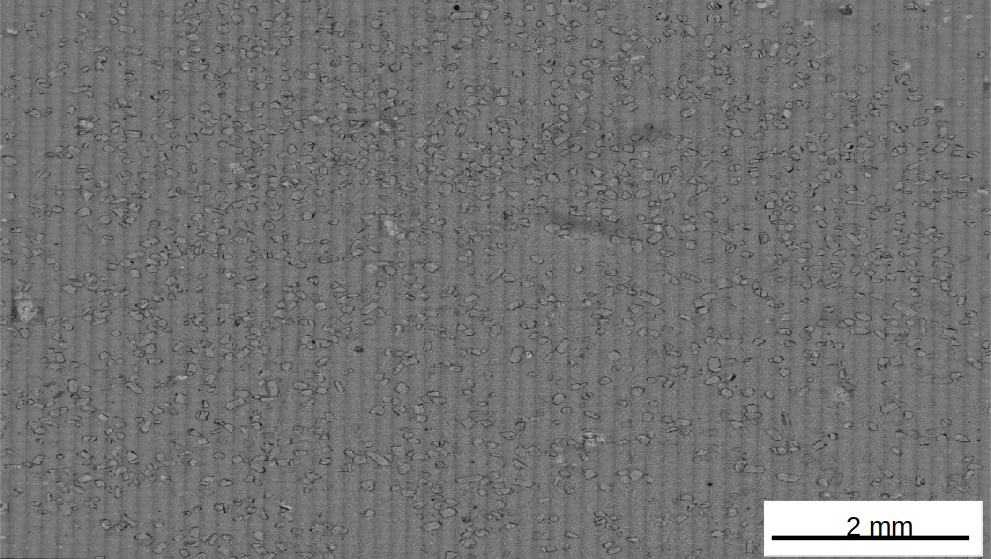

Long time no see. However, work was in progress since that. We have made significant progress in our project, but the code is not ready yet. What difficulties did we have to tackle? Many of them before even starting to use artificial intelligence. Image acquisition: Something that seems extremely obvious. At a magnification of 200x it is, but we need at least 500x. And as some of you might know, geological samples are almost never even. These are not industrially engineered products with high-quality nanosurfaces but the highly variable yet beautiful makings of our Mother Earth. Focusing is okay for individual crystals, but the shallow depth of focus is a serious issue in high-resolution objectives. This means that if our sample is not 100% flat, parts of our image will be out of focus (Fig. 1). This is unacceptable for our task, we have to see our tracks sharply. The funny part is that it is basically no problem if we do our work manually: we just have to adjust the vertical axis on our microscope. One part of the difficulty is that even single images are partly in focus and partly out of focus. The other challenge is that our entire sample consists of many individual images and our camera (mounted on our microscope) needs a common surface for the entire sample. Even with our sophisticated commercial software, we cannot get a nuanced-enough focus surface for such big images. But luckily, we found solutions for both of the challenges although it took us a few months of effort (out of our very valuable Marie-Curie project time). Image stitching: We work at 1000 or 500x magnification with a sub-micron resolution. How many images does that mean? Yes, many, hundreds or even thousands if we consider a standard sample of 1 cm*1 cm (Fig. 2). So each of our samples will consist of many sub-images, which need to be stitched together to be able to train our network. One would think that this is straightforward. However, a commercial stitcher would use a way too large memory for a normal customer like us and they do not have the flexibility we need. So we need to program our own stitcher. Easier said than done, we are not programmers but geologists, but we managed it. (Geologists are the jolly joker natural scientists after all, although employers often don't even know that this profession exists.) Obviously, it was mainly not my own script but my colleague's, I only modified the existing script for my own needs. But we forgot one thing: even the best stages to date struggle to have a sub-micrometer stepping precision. In simple words, it means that even if we set up everything correctly for image shooting, the stepper of our stage might make some small errors, which messes up our image. Think about it: our study objects are about 10-20 um long and 1 um across! So our stitcher program needs to be able to correct for these fairly common errors too. First of all, very briefly about my research area (please see further details on the website): I work on fission tracks. Fission tracks form in natural, uranium bearing minerals as the result of the spontaneous fission of 238U. Of course not only in natural minerals, not only spontaneously (think of e.g. nuclear power plants) and not only by the fission of 238U. But let us focus on the previously described conditions. After a mineral forms and time passes, fission events occur. The more time passes the more fission events occur. This means that if we determine the amount of fission tracks and the uranium content of the mineral, we can calculate a fission-track age! It is a method for dating minerals, rocks and geological events! Depending on the mineral, fission tracks are ca. 10-20 um in length, so fairly small, but still well recognizable with an optical microscope. Let us "look" in the microscope with 1000-times magnification: And here comes my task. I have to count these tracks by looking in the microscope and repeatedly clicking a tally counter. Thousands -- ten thousands of times for a single project, because every project needs 10-15 samples and 10-100 grains/sample counted. The manual counting process is tedious, time-consuming and very subjective.

Ever since I started working on fission tracks, one of the questions that I was most often confronted with: Do you still do it manually? There is machine learning out there! Well, to be honest, that was also one of my basic ideas while writing my proposal. And if you look at the above photograph, it seems very straightforward, right? How many tracks do you see? I think that most of you can count them, and come up with a number around 100-110. But if you really took the time to count them, you might ask: are these "small things" also tracks? And why are some of them so blurry? Well, that is one of the main points. We see a 2D image here, but tracks go in all directions so they are 3D objects. Vertically they look like a circle, whereas flat tracks resemble to cones, just because of different orientations. But we have to count all of them, because their number defines the age of the crystal!! In the microscope we can easily change the focus and go up and down in the image, but that is not so straightforward by analyzing photographs. And if you still don't see the challenge, check the second photograph above. How many tracks do you see :)? Counting this sample would be a routine task in the microscope, but can we train a neural network to do it for us? It was a hard decision while writing my proposal to explicitly state that I do not intend to share my research on social media. Mainly, because I was afraid that this would lead to point deduction in the evaluation process...a strange thought itself, but all of you who has faced similar dilemma knows that these fears are a real problem for many researchers. Thanks to the fair evaluation system of MC proposals, my explanation was accepted and was not subject to point-punishment! So why did I make this decision after all?

Last but not least, before you criticise me for that: I might share this blog on my Researchgate profile. Why? First, because I personally do not consider Researchgate as a social media platform, but I get exclusively professional messages there, which are directly related to my research. Second, because I might want to impress my funder by showing how many people have viewed my project-related blog. But if we come back to the initial idea of this post, is this a decent motivation at all? In September 2020 we submitted our grant proposal with my supervisor, Keno Lünsdorf, for a Marie-Curie Fellowship. That time I was struggling with my actual postdoctoral duties, the construction of our preparation laboratories and I was overwhelmed by the expected birth of our beloved son. So the entire MC project seemed just way too distant, also taking into account the known success rates. To my greatest surprise, the proposal got positive reviews and we got a few months as a family to get prepared for the upcoming adventure. This blog will give insight into the professional and personal aspects of my Marie-Curie Fellowship. As this content is intentionally not posted on social media, I very much appreciate your visit and your time dedicated to read my blog.

|

FTAIGEThis project has received funding from the European |

RSS Feed

RSS Feed